LLMs in Production: The Governance Gap Between Demo and Deployment

The demo worked brilliantly. The pilot impressed stakeholders. Now someone's asking when you can roll it out to production.

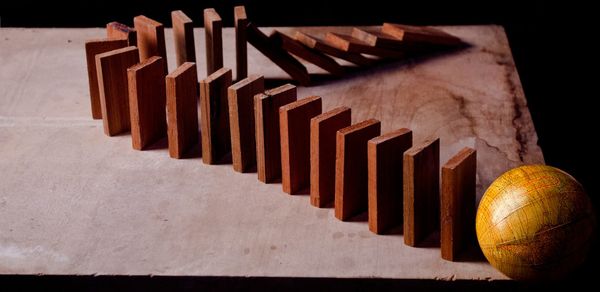

This is where most AI initiatives stall, not because the technology failed, but because nobody planned for what production actually requires. The gap between demo and deployment is where organisations suffer real losses: data breaches, financial exposure, regulatory penalties, and reputational damage that takes years to repair. Most teams dramatically underestimate what's involved.

I've spent over two years working on LLM integration projects involving prompt engineering, compliance frameworks, technical architecture, and the often under-recognised work of preparing AI for production deployment. In every case, the demos were impressive. The governance, compliance, and operational requirements revealed gaps that weren't acceptable risks. More recently, I've built custom MCP servers that give Claude direct filesystem access across my development environment, improving my productivity. The gains are real. But here's what I keep finding: most organisations aren't ready for what production actually demands.

The Inconvenient Truth About LLM Variability

Here's what makes AI governance genuinely hard: LLMs are non-deterministic by design. Same prompt. Same model. Different outputs.

This isn't a bug to be fixed; it's fundamental to how these systems work. The "intelligence" that makes LLMs useful for complex reasoning is inseparable from their unpredictability. But variability is tunable, not binary. Temperature settings constrain randomness. Structured outputs enforce response formats. Function calling bounds behaviour to defined actions. The question isn't "how do we eliminate variability?" It's "how do we constrain it to acceptable bounds for our specific use case?"
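
To make "constrain it to acceptable bounds" concrete, here is a minimal sketch of the consuming side: the application accepts a model reply only if it parses as JSON and names an action from a fixed allow-list. The action names and the `parse_action` helper are hypothetical and for illustration only; this is not any provider's API.

```python
import json

# Hypothetical allow-list: the only actions the application will act on.
ALLOWED_ACTIONS = {"summarise", "classify", "escalate_to_human"}


def parse_action(raw_reply: str) -> dict:
    """Accept a model reply only if it is a JSON object naming an allowed action.

    Anything else falls back to human escalation instead of being executed.
    """
    fallback = {"action": "escalate_to_human", "reason": "unparseable or out-of-bounds reply"}
    try:
        reply = json.loads(raw_reply)
    except json.JSONDecodeError:
        return fallback
    if not isinstance(reply, dict):
        return fallback
    action = reply.get("action")
    if not isinstance(action, str) or action not in ALLOWED_ACTIONS:
        return fallback
    return reply
```

The model can still vary its wording, but the set of things the system will actually do stays fixed.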

A marketing team generating content ideas might welcome high variability. A financial services firm making customer-facing decisions needs deterministic paths with human oversight at critical junctures. Same technology, radically different governance requirements.

The Moving Target Problem

Here's something that catches teams out: your AI system's behaviour can change without any deployment on your part.

When OpenAI updates GPT-4, when Anthropic releases a new version of Claude, or when your cloud provider migrates you to a newer model variant, your carefully tuned prompts may behave differently. Outputs that passed testing might fail in production. Edge cases you'd handled start appearing again.

This isn't a temporary growing pain. It's the permanent operational reality of building on foundation models you don't control.

Practical mitigations:

- Pin to specific model versions wherever your provider allows it

- Maintain regression test suites that detect behaviour changes

- Build abstraction layers that let you swap providers when needed

- Version control your prompts with the same rigour as application code

- Budget for prompt re-engineering with every major model update
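
A rough sketch of how the first four mitigations can fit together, assuming a hypothetical `call_model(model_version, prompt)` function as the abstraction layer. The `PromptRelease` class, the field names, and the model identifier used with it are illustrative, not a real library or a real model name.

```python
import hashlib
from dataclasses import dataclass


@dataclass(frozen=True)
class PromptRelease:
    """A prompt pinned to one model version and identified by a content hash."""
    name: str
    model_version: str  # placeholder identifier, not a real model name
    template: str       # expects an {input} placeholder

    @property
    def fingerprint(self) -> str:
        # Changes whenever the template or the pinned model version changes.
        payload = f"{self.model_version}\n{self.template}".encode("utf-8")
        return hashlib.sha256(payload).hexdigest()[:12]


def regression_failures(release, call_model, cases):
    """Run stored cases through `call_model` and return the inputs whose check fails.

    `call_model(model_version, prompt)` is the abstraction layer: swap the
    function to swap providers. Each case is (input_text, check_function).
    """
    failures = []
    for input_text, check in cases:
        prompt = release.template.format(input=input_text)
        output = call_model(release.model_version, prompt)
        if not check(output):
            failures.append(input_text)
    return failures
```

Running `regression_failures` on a schedule, and again whenever the fingerprint changes, is one way to notice a behaviour change before users do.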

The teams treating prompt engineering as a one-time setup cost are the ones scrambling when their production system starts behaving unexpectedly.

The Testing Problem Nobody Has Solved

Here's an uncomfortable truth: you can't test LLM prompts the way you test traditional software.

With conventional code, you define inputs and expected outputs. If the function returns what you expect, the test passes. Deterministic. Repeatable. Well understood.

LLMs don't work that way. The same prompt with the same input can produce different outputs. That's not a bug—it's the feature that makes them useful. But it makes traditional testing approaches fundamentally inadequate.

The variability compounds.

You're not just dealing with LLM output variability. You're dealing with input variability, too. Real users don't send clean, predictable requests. They send typos, ambiguous phrasing, edge cases you never anticipated, and occasionally deliberate attempts to break your system. Multiply input variability by output variability, and your test matrix explodes.

Automation is harder than it looks.

You can't write a unit test that says "assert output equals X" because the output won't equal X; it will be semantically similar to X, sometimes. How do you automate verification of "good enough"? You end up building AI-based evaluation systems, which introduces another layer of variability and complexity.
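
One partial workaround, short of building an AI-based evaluator, is to assert on properties of the output rather than its exact text. The sketch below is an assumption about what such a check might look like. The `check_output` helper and its rules are hypothetical, and simple phrase matching will miss plenty of real failures.

```python
def check_output(output: str, must_include, must_not_include, max_words: int) -> list:
    """Return a list of human-readable failures; an empty list means 'good enough'."""
    text = output.lower()
    failures = []
    for phrase in must_include:
        if phrase.lower() not in text:
            failures.append(f"missing: {phrase}")
    for phrase in must_not_include:
        if phrase.lower() in text:
            failures.append(f"forbidden: {phrase}")
    if len(output.split()) > max_words:
        failures.append(f"too long: more than {max_words} words")
    return failures
```

Two differently worded answers can both pass, which is the point: the test tolerates variability in phrasing but not in the facts that matter.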

Manual testing doesn't scale.

Human evaluation is more reliable but also more expensive and slower. For a production system handling thousands of requests, you can't manually review everything. You're sampling. And sampling means accepting that some failures will reach users.

What's the acceptable success rate?

This is where most projects stall. Traditional software has clear pass/fail criteria. With LLMs, you're dealing with probabilistic outcomes. Is 95% accuracy acceptable? 99%? It depends entirely on what happens in the failure cases—and getting compliance sign-off on "it works most of the time" is a conversation most regulated industries aren't ready to have.
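
It helps to put numbers on the uncertainty before that conversation. The sketch below computes a Wilson score interval for a pass rate measured on a sample. The function name and the example figures are mine, and it assumes the sampled requests are representative of production traffic.

```python
import math


def wilson_interval(passes: int, n: int, z: float = 1.96):
    """Wilson score interval for a pass rate (z = 1.96 gives roughly 95% confidence)."""
    if n <= 0:
        raise ValueError("n must be positive")
    p = passes / n
    denom = 1 + z * z / n
    centre = (p + z * z / (2 * n)) / denom
    half_width = z * math.sqrt(p * (1 - p) / n + z * z / (4 * n * n)) / denom
    return centre - half_width, centre + half_width


# Example: 190 of 200 sampled responses judged acceptable.
low, high = wilson_interval(190, 200)
```

With 190 passes out of 200, the observed rate is 95%, but the interval runs from roughly 91% to 97%. A "95% accurate" headline from a sample of that size is compatible with a true failure rate approaching one in eleven.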

There's no clean answer here. The organisations making progress are the ones that are honest about these limitations, rather than pretending they've solved them.

The Deployment Spectrum

Most conversations I have about AI deployment frame it as binary: cloud APIs or self-hosted. Reality offers more options, each with distinct trade-offs.

Consumer SaaS (ChatGPT, Claude.ai)

Fastest adoption path. Lowest barrier. Highest data residency concerns. Your prompts, context, and data flow through third-party infrastructure with varying retention policies. For anything touching sensitive data, this is typically a non-starter.

Enterprise API Tiers

OpenAI and Anthropic offer enterprise agreements with contractual commitments: your data isn't used for training, retention is configurable, and security certifications are in place. Better than consumer tiers, but data still leaves your perimeter, and you're dependent on their infrastructure availability.

Cloud Provider AI Services (Azure OpenAI, AWS Bedrock)

This is where most regulated enterprises land. Microsoft's Azure OpenAI runs GPT models within your Azure tenant. AWS Bedrock offers Claude within your AWS environment. Data residency is controllable. Audit trails integrate with your existing security tooling. You inherit your cloud compliance posture.

The catches: capacity constraints and rate limits have been inconsistent. Regional availability varies. You're still dependent on a third party for model updates. And "isolated environment" marketing can obscure shared infrastructure realities at certain layers.

Self-Hosted Open Source

Llama, Mistral, Qwen, and others can run entirely on your infrastructure. Complete data sovereignty. No external inference dependencies.

The trade-offs are significant: reduced capability relative to state-of-the-art models, substantial investment in GPU infrastructure, operational complexity for updates and fine-tuning, and a scarcity of ML operations talent. This makes sense for specific, bounded use cases where regulatory requirements are absolute, not as a general-purpose alternative.

The Pragmatic Answer: Hybrid

Most mature AI deployments are hybrid: cloud APIs for development and low-sensitivity workloads; enterprise cloud services for production with customer data; and potentially self-hosted for specific high-sensitivity functions.

This requires clear data classification and routing logic. Which brings us to governance.
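
As a minimal sketch of that routing logic, assuming four hypothetical sensitivity levels and three deployment targets that mirror the hybrid split above. The names are illustrative; a real classification scheme would come from your own data policy.

```python
from enum import IntEnum


class Sensitivity(IntEnum):
    PUBLIC = 0
    INTERNAL = 1
    CUSTOMER = 2
    RESTRICTED = 3


# Hypothetical mapping from data classification to deployment target.
ROUTES = {
    Sensitivity.PUBLIC: "cloud_api",
    Sensitivity.INTERNAL: "cloud_api",
    Sensitivity.CUSTOMER: "enterprise_cloud",
    Sensitivity.RESTRICTED: "self_hosted",
}


def route(classification):
    """Pick a deployment target; unclassified data fails closed to the strictest tier."""
    if classification is None:
        return ROUTES[Sensitivity.RESTRICTED]
    return ROUTES[classification]
```

The design choice worth copying is the fail-closed default: data nobody has classified is treated as the most sensitive, not the least.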

What Production-Grade Governance Actually Requires

Most AI governance frameworks I've reviewed are compliance theatre. Policy documents that satisfy auditors but don't technically constrain system behaviour.

Production-grade governance requires technical controls, not just policies.

Input validation and prompt injection defence. If your system accepts user input that gets incorporated into prompts, you're exposed to injection attacks. This isn't theoretical—it's actively exploited. How are inputs sanitised? How are system prompts protected? How do you detect and log injection attempts?
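
For the "detect and log" part, here is a deliberately simple sketch. The patterns and the `screen_input` helper are illustrative assumptions. Pattern matching catches only the crudest attempts and is not a sufficient defence on its own, but it does give you a log to look at.

```python
import logging
import re

logger = logging.getLogger("prompt_guard")

# Illustrative patterns only; real attacks are far more varied.
SUSPICIOUS_PATTERNS = [
    re.compile(r"ignore (all |any )?(previous|prior|above) instructions", re.IGNORECASE),
    re.compile(r"reveal (your|the) system prompt", re.IGNORECASE),
]


def screen_input(user_text: str) -> bool:
    """Return True if no pattern matches; log a warning and return False otherwise."""
    for pattern in SUSPICIOUS_PATTERNS:
        if pattern.search(user_text):
            logger.warning("possible injection attempt: pattern=%s", pattern.pattern)
            return False
    return True
```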

Output guardrails and circuit breakers. What happens when the model produces something unexpected? What actions can it take autonomously versus what requires human approval? How do you handle uncertainty?
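
A circuit breaker for model output can be very small. This sketch assumes some guardrail check already returns pass or fail for each response. The `CircuitBreaker` class and its threshold are hypothetical.

```python
class CircuitBreaker:
    """Opens after `threshold` consecutive guardrail failures; stays open until reset."""

    def __init__(self, threshold: int = 3):
        self.threshold = threshold
        self.consecutive_failures = 0

    @property
    def is_open(self) -> bool:
        return self.consecutive_failures >= self.threshold

    def record(self, output_ok: bool) -> None:
        if self.is_open:
            return  # stays open until a human calls reset()
        if output_ok:
            self.consecutive_failures = 0
        else:
            self.consecutive_failures += 1

    def reset(self) -> None:
        """Explicit human decision to resume autonomous operation."""
        self.consecutive_failures = 0
```

While the breaker is open, the calling code would stop acting on model output and hand requests to a person or a static fallback.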

Comprehensive audit trails. For regulated industries, you need to answer "why did the system do X?" with evidence. This means logging all inputs, outputs, and intermediate reasoning. It means version-controlled prompts and configurations. It means evaluation datasets that test for consistent behaviour.
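
As a sketch of what one audit entry might contain, assuming JSON lines as the storage format. The field names and the `audit_record` helper are illustrative, and a real system would also need retention, access control, and handling of personal data in the logged text.

```python
import hashlib
import json
from datetime import datetime, timezone


def audit_record(prompt_version: str, model_version: str, user_input: str, model_output: str) -> str:
    """Build one JSON audit line with a SHA-256 digest over its own contents."""
    record = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "prompt_version": prompt_version,
        "model_version": model_version,
        "input": user_input,
        "output": model_output,
    }
    # The digest lets a later reader detect accidental or deliberate edits.
    body = json.dumps(record, sort_keys=True).encode("utf-8")
    record["sha256"] = hashlib.sha256(body).hexdigest()
    return json.dumps(record, sort_keys=True)
```

Recording the prompt and model versions alongside every input and output is what makes "why did the system do X?" answerable months later.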

Monitoring beyond uptime. Traditional APM doesn't capture what matters for AI systems. You need to track output quality metrics, consistency over time, and drift from expected behaviour. Tools like Langfuse, Arize, and Weights & Biases are building this capability, but many organisations are still flying blind.
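
Even without a dedicated tool, a basic drift signal is cheap to build. This sketch tracks a rolling pass rate against a baseline. The `DriftMonitor` class, window size, and tolerance are assumptions, and it presumes you already have some per-response quality check feeding it.

```python
from collections import deque


class DriftMonitor:
    """Flags drift when the rolling pass rate falls below baseline minus tolerance."""

    def __init__(self, baseline_rate: float, window: int = 100, tolerance: float = 0.05):
        self.baseline_rate = baseline_rate
        self.tolerance = tolerance
        self.results = deque(maxlen=window)  # keeps only the most recent results

    def record(self, passed: bool) -> None:
        self.results.append(passed)

    @property
    def current_rate(self):
        return sum(self.results) / len(self.results) if self.results else None

    def has_drifted(self) -> bool:
        # Wait for a full window so a few early failures don't raise an alarm.
        if len(self.results) < self.results.maxlen:
            return False
        return self.current_rate < self.baseline_rate - self.tolerance
```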

The Maturity Test

Before pushing AI to production, ask yourself:

- Can you explain to a regulator exactly what the system did and why?

- Can you reproduce the system's behaviour for a specific historical input?

- Can you detect when the system's behaviour has drifted from expected?

- Can you roll back to a known-good state when something goes wrong?

- Can you prove that the data never left the approved boundaries?

If you can't answer yes to all of these, you're not ready for production, regardless of how impressive the demo was.

The organisations successfully deploying AI at scale aren't the ones with the most sophisticated models. They're the ones with the most mature governance infrastructure.

The technology is ready. The question is whether your organisation is.

And if you're considering agentic AI, systems that don't just respond but take autonomous actions, understand that everything I've described here compounds. Variability multiplies across decision chains. Audit trails become exponentially more complex. Reproducibility becomes nearly impossible. The governance gap for agentic systems isn't incrementally harder than prompt-based integration. It's a different category of problem entirely.

Get the basics right first.

What's your experience with LLM operations in production? I'm particularly interested in what's working and what isn't. The vendor content consists entirely of success stories, but the reality is more instructive.

Chris Brown is a fractional CTO and enterprise architect with 28 years of experience across insurance, emergency services, and enterprise software. He works with insurers, SaaS companies, and investors through The Build Paradox.

To discuss how he can help your organisation 👉 www.buildparadox.com